Dot Product

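The inner product (or dot product) on a vector space $V$ over $\mathbb{R}$ is a map $\langle \cdot, \cdot \rangle : V \times V \to \mathbb{R}$ that assigns to any two vectors $u, v \in V$ a real number $\langle u, v \rangle$ such that,

- $\langle \alpha u + \beta v, w \rangle = \alpha \langle u, w \rangle + \beta \langle v, w \rangle$ for all $u, v, w \in V$ and $\alpha, \beta \in \mathbb{R}$ (Linearity Property)

- $\langle u, v \rangle = \langle v, u \rangle$ (Symmetric Property)

- For any $v \in V$, $\langle v, v \rangle \geq 0$, and $\langle v, v \rangle = 0$ if and only if $v$ is the zero vector. (Positive Definite Property)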

The vector space for which the inner product is defined is called an inner product vector space.
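As a quick numerical sanity check, the sketch below tests the three properties for the standard dot product on $\mathbb{R}^3$. The helper name `dot` and the sample vectors and scalars are assumptions chosen only for illustration; a check on a few vectors is not a proof.

```python
import math

def dot(u, v):
    """Standard dot product on R^n (vectors as equal-length lists)."""
    return sum(a * b for a, b in zip(u, v))

# Arbitrary sample vectors and scalars, chosen only for illustration.
u, v, w = [1.0, 2.0, 3.0], [4.0, -1.0, 0.5], [-2.0, 0.0, 1.0]
alpha, beta = 2.0, -3.0

# Linearity: <alpha*u + beta*v, w> == alpha*<u, w> + beta*<v, w>
combo = [alpha * a + beta * b for a, b in zip(u, v)]
linearity_lhs = dot(combo, w)
linearity_rhs = alpha * dot(u, w) + beta * dot(v, w)

# Symmetry: <u, v> == <v, u>
symmetric = math.isclose(dot(u, v), dot(v, u))

# Positive definiteness: <u, u> > 0 for non-zero u, and <0, 0> == 0
zero = [0.0, 0.0, 0.0]
positive = dot(u, u) > 0 and dot(zero, zero) == 0

print(math.isclose(linearity_lhs, linearity_rhs), symmetric, positive)
```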

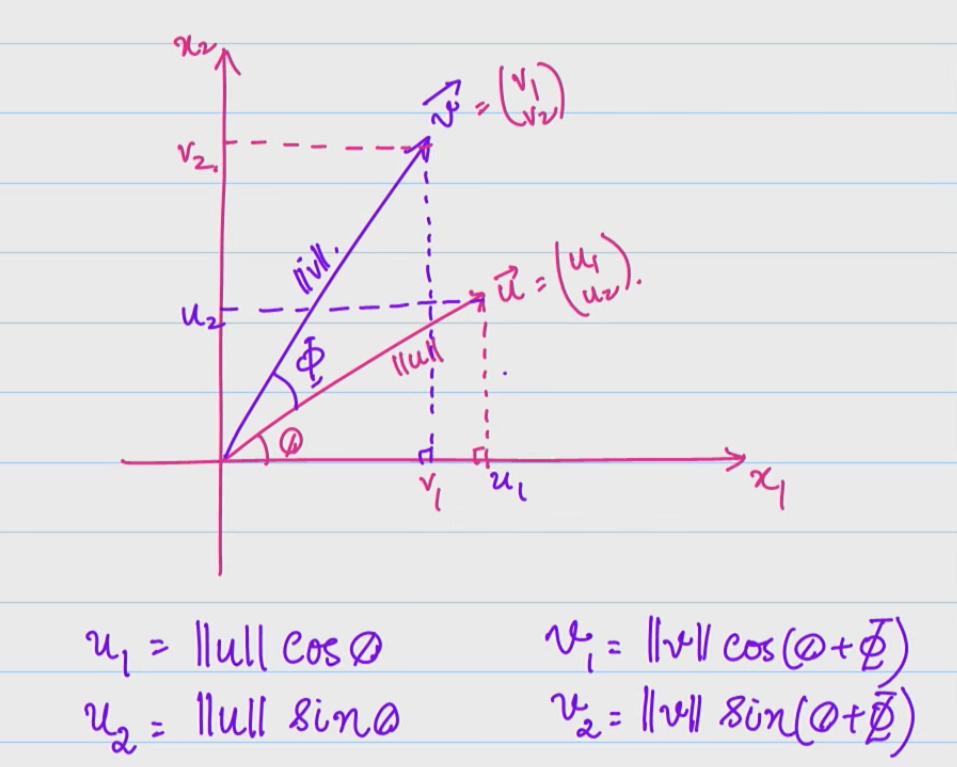

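Suppose the two vectors $u = (u_1, u_2)$ and $v = (v_1, v_2)$ make angles $\alpha$ and $\beta$ with the horizontal axis, so that the angle between them is $\theta = \alpha - \beta$. Writing $\|u\| = \sqrt{\langle u, u \rangle}$ for the length of $u$, we can write $\cos\theta$ using trigonometric identities as -

$$\cos\theta = \cos(\alpha - \beta) = \cos\alpha \cos\beta + \sin\alpha \sin\beta = \frac{u_1}{\|u\|} \frac{v_1}{\|v\|} + \frac{u_2}{\|u\|} \frac{v_2}{\|v\|}$$

$\langle u, v \rangle$ is defined as $u_1 v_1 + u_2 v_2$. So using the above values for this gives us,

$$\langle u, v \rangle = \|u\| \|v\| \cos\theta$$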
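The relation between the dot product and the angle can be tried out numerically. This is a minimal sketch that assumes the standard dot product on $\mathbb{R}^n$; the helper names `dot`, `norm` and `angle_between` are made up for this example.

```python
import math

def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

def norm(u):
    """Length of u, i.e. sqrt(<u, u>)."""
    return math.sqrt(dot(u, u))

def angle_between(u, v):
    """Angle in radians between two non-zero vectors."""
    cos_theta = dot(u, v) / (norm(u) * norm(v))
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.acos(cos_theta)

theta = angle_between([1.0, 0.0], [1.0, 1.0])
print(math.degrees(theta))
```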

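Cauchy-Schwarz Inequality

For any two vectors $u, v$ in an inner product space,

$$|\langle u, v \rangle| \leq \|u\| \|v\|$$

with equality exactly when one vector is a scalar multiple of the other.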
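The Cauchy-Schwarz inequality says that $|\langle u, v \rangle|$ never exceeds $\|u\| \|v\|$. The sketch below measures the gap between the two sides for a generic pair and for a parallel pair, assuming the standard dot product; `cauchy_schwarz_gap` is a hypothetical helper name.

```python
import math

def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

def norm(u):
    """Length of u, i.e. sqrt(<u, u>)."""
    return math.sqrt(dot(u, u))

def cauchy_schwarz_gap(u, v):
    """Return ||u|| ||v|| - |<u, v>|, which should not be negative
    (up to floating-point rounding)."""
    return norm(u) * norm(v) - abs(dot(u, v))

# A generic pair: the gap is strictly positive.
gap_general = cauchy_schwarz_gap([1.0, 2.0, 3.0], [4.0, -1.0, 0.5])

# A parallel pair (u = 2v): the gap is zero up to rounding.
gap_parallel = cauchy_schwarz_gap([2.0, 4.0], [1.0, 2.0])

print(gap_general, gap_parallel)
```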

Orthogonal Vectors

If $u, v \in V$ are vectors such that $\langle u, v \rangle = 0$, then we say that $u$ and $v$ are orthogonal to each other.

- The zero vector is orthogonal to all vectors in any vector space.

- If $u, v$ are non-zero vectors, then $\langle u, v \rangle = 0$ can only happen when the angle between $u$ and $v$ is $90^\circ$.

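Let $W^\perp$ be the set of all vectors in $V$ that are orthogonal to every vector in $W$, where $W$ is a subspace of the inner product vector space $V$.

Let $u, v \in W^\perp$, $w \in W$ and $\alpha \in \mathbb{R}$ -

- The zero vector is always orthogonal to any vector in any vector space. Thus $W^\perp$ contains the zero vector and is non-empty.

- Because $\langle u, w \rangle = 0$ and $\langle v, w \rangle = 0$, we can say $\langle u + v, w \rangle = \langle u, w \rangle + \langle v, w \rangle = 0$. This implies that $u + v \in W^\perp$ too. Thus $W^\perp$ is closed under vector addition.

- Because $\langle u, w \rangle = 0$, we can say $\alpha \langle u, w \rangle = 0$. Consequently this would mean that $\langle \alpha u, w \rangle = 0$, and hence $\alpha u \in W^\perp$. Thus $W^\perp$ is closed under scalar multiplication.

Hence we can say that $W^\perp$ is a vector space too (a subspace of $V$) as it is closed under vector addition and scalar multiplication and contains the zero vector.

The vector space $W^\perp$ is called the orthogonal complement of the space $W$.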
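The closure argument can be illustrated on a small case. In this sketch, $W$ is assumed to be the line in $\mathbb{R}^3$ spanned by a single vector `w`, so a vector lies in the orthogonal complement exactly when it is orthogonal to `w`. The vectors are arbitrary examples.

```python
def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

# W is the line spanned by w (an illustrative choice).
w = [1.0, 1.0, 0.0]

# Two vectors orthogonal to w, hence in the orthogonal complement of W.
u = [1.0, -1.0, 0.0]
v = [0.0, 0.0, 1.0]
alpha = 5.0

# Closure under addition: u + v is still orthogonal to w.
vector_sum = [a + b for a, b in zip(u, v)]

# Closure under scalar multiplication: alpha * u is still orthogonal to w.
scaled = [alpha * a for a in u]

print(dot(u, w), dot(v, w), dot(vector_sum, w), dot(scaled, w))
```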

Orthonormal vectors

A set of vectors is said to be orthonormal if -

- Each vector in the set is orthogonal to every other vector.

- Each vector has a magnitude of 1.
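Both conditions can be checked at once: for an orthonormal set, $\langle v_i, v_j \rangle$ is 1 when $i = j$ and 0 otherwise. The sketch below assumes the standard dot product; `is_orthonormal` and its tolerance `tol` are hypothetical names for this example.

```python
import math

def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

def is_orthonormal(vectors, tol=1e-9):
    """Check <v_i, v_j> = 1 if i == j else 0, within a tolerance."""
    for i, vi in enumerate(vectors):
        for j, vj in enumerate(vectors):
            expected = 1.0 if i == j else 0.0
            if abs(dot(vi, vj) - expected) > tol:
                return False
    return True

s = 1.0 / math.sqrt(2.0)
rotated = [[s, s], [s, -s]]          # unit length and mutually orthogonal
not_unit = [[1.0, 0.0], [0.0, 2.0]]  # orthogonal, but second vector has length 2

print(is_orthonormal(rotated), is_orthonormal(not_unit))
```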

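Suppose $\{v_1, v_2, \dots, v_n\}$ is a set of orthonormal vectors, what can we say about the dependence/independence of this set?

The set of vectors is independent only when $\alpha_1 v_1 + \alpha_2 v_2 + \dots + \alpha_n v_n = 0$ holds only when $\alpha_1 = \alpha_2 = \dots = \alpha_n = 0$. Let,

$$\alpha_1 v_1 + \alpha_2 v_2 + \dots + \alpha_n v_n = 0$$

Taking the inner product of both sides with $v_1$, and using $\langle v_1, v_1 \rangle = 1$ and $\langle v_i, v_1 \rangle = 0$ for $i \neq 1$,

$$\langle \alpha_1 v_1 + \dots + \alpha_n v_n, v_1 \rangle = \alpha_1 \langle v_1, v_1 \rangle + \dots + \alpha_n \langle v_n, v_1 \rangle = \alpha_1 = 0$$

Similarly we can show that $\alpha_2 = \dots = \alpha_n = 0$. Thus we can say that every set of orthonormal vectors is linearly independent and can be chosen as a basis for the vector space they span.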

Fourier Expansion

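Let $v = \alpha_1 v_1 + \alpha_2 v_2 + \dots + \alpha_n v_n$ be the basis expansion of any vector $v$ in an orthonormal basis $\{v_1, v_2, \dots, v_n\}$. We can get the scalars for each basis vector by doing,

$$\langle v, v_1 \rangle = \alpha_1 \langle v_1, v_1 \rangle + \alpha_2 \langle v_2, v_1 \rangle + \dots + \alpha_n \langle v_n, v_1 \rangle = \alpha_1$$

Similarly we can find $\alpha_2, \dots, \alpha_n$.

Using this knowledge we can rewrite $v$ as,

$$v = \sum_{i=1}^{n} \langle v, v_i \rangle v_i$$

This is called the Fourier Expansion for $v$.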
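To see the expansion at work, the sketch below computes the coefficients $\langle v, v_i \rangle$ for a vector in $\mathbb{R}^2$ and rebuilds the vector from them. The orthonormal basis (the standard basis rotated by 45 degrees, with one axis flipped) and the vector `v` are illustrative assumptions.

```python
import math

def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

s = 1.0 / math.sqrt(2.0)
basis = [[s, s], [s, -s]]  # an orthonormal basis of R^2
v = [3.0, 1.0]

# Fourier coefficients: alpha_i = <v, v_i>
coeffs = [dot(v, q) for q in basis]

# Rebuild v as sum_i alpha_i * v_i, one component at a time.
reconstructed = [
    sum(c * q[k] for c, q in zip(coeffs, basis))
    for k in range(len(v))
]

print(coeffs, reconstructed)
```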

Parseval’s Theorem

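We know that $v = \sum_{i=1}^{n} \langle v, v_i \rangle v_i$ for an orthonormal basis $\{v_1, \dots, v_n\}$. Taking the inner product of $v$ with itself, all cross terms vanish because $\langle v_i, v_j \rangle = 0$ for $i \neq j$, which gives

$$\|v\|^2 = \langle v, v \rangle = \sum_{i=1}^{n} \langle v, v_i \rangle^2$$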
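Parseval's theorem states that the squared length of a vector equals the sum of its squared coefficients in an orthonormal basis. A small numerical check, assuming the standard dot product and an illustrative orthonormal basis of $\mathbb{R}^2$:

```python
import math

def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

s = 1.0 / math.sqrt(2.0)
basis = [[s, s], [s, -s]]  # an orthonormal basis of R^2
v = [3.0, 1.0]

# Sum of squared coefficients <v, v_i>^2 in the orthonormal basis.
coeff_energy = sum(dot(v, q) ** 2 for q in basis)

# Squared length of v computed directly.
norm_squared = dot(v, v)

print(coeff_energy, norm_squared)
```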

Orthogonal Projections

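The orthogonal projection of a vector onto a subspace is the best approximation of that vector within the subspace: of all vectors in the subspace, it is the one closest to the original vector.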

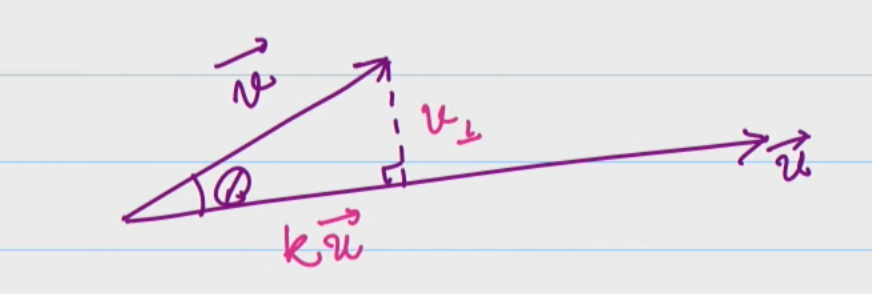

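Let $u, v$ be two non-zero vectors in an inner product space $V$. What is the information of $v$ available in the direction of $u$?

We can drop a perpendicular line onto $\alpha u$ from $v$, where $\alpha$ is some real scalar. Calling this perpendicular vector $w$, by vector addition -

$$v = \alpha u + w$$

As $w$ is orthogonal to $\alpha u$ by design, we can say that it's orthogonal to $u$ as well. So,

$$\langle w, u \rangle = \langle v - \alpha u, u \rangle = \langle v, u \rangle - \alpha \langle u, u \rangle = 0 \implies \alpha = \frac{\langle v, u \rangle}{\langle u, u \rangle}$$

Hence we can call $\alpha u$ the orthogonal projection of $v$ along the direction of $u$. With the standard dot product $\langle u, v \rangle = u^T v$, the above equation can be rewritten as,

$$\alpha u = \frac{u^T v}{u^T u} u = \frac{u u^T}{u^T u} v$$

Here $u u^T$ would be an $n \times n$ matrix if $u$ is an $n$-dimensional vector. It is also called the outer product of $u$ with itself. The scaled matrix $P = \frac{u u^T}{u^T u}$ is also known as the projection matrix. Properties of the outer product -

- $u u^T$ is a rank-1 matrix as all columns of the outer product would just be multiples of $u$.

- $u u^T$ is a symmetric matrix, meaning $(u u^T)^T = u u^T$.

- $P$ is an idempotent matrix, meaning $P^2 = P$. The unscaled $u u^T$ is idempotent only when $u^T u = 1$.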
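The sketch below builds the projection matrix $\frac{u u^T}{u^T u}$ for a sample vector and checks symmetry, idempotence, and that what is left over after projecting is orthogonal to $u$. It assumes the standard dot product on $\mathbb{R}^3$; the helper names (`outer`, `matvec`, `matmul`, `projection_matrix`) and the vectors are made up for illustration.

```python
import math

def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

def outer(u, v):
    """Outer product u v^T as a list of rows."""
    return [[a * b for b in v] for a in u]

def matvec(M, x):
    """Matrix-vector product M x."""
    return [dot(row, x) for row in M]

def matmul(A, B):
    """Matrix-matrix product A B."""
    cols = list(zip(*B))
    return [[dot(row, col) for col in cols] for row in A]

def projection_matrix(u):
    """Projection onto the line spanned by u: (u u^T) / (u^T u)."""
    uu = dot(u, u)
    return [[entry / uu for entry in row] for row in outer(u, u)]

u = [1.0, 2.0, 2.0]
v = [3.0, 0.0, 3.0]

P = projection_matrix(u)
proj = matvec(P, v)                          # projection of v along u
residual = [a - b for a, b in zip(v, proj)]  # the perpendicular part of v
P_squared = matmul(P, P)

print(proj, dot(residual, u))
```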

Gram-Schmidt Process

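Let $W$ be a $k$-dimensional subspace of an $n$-dimensional vector space $V$ where $k \leq n$. Let the basis of $W$ be $\{a_1, a_2, \dots, a_k\}$ and we wish to create an orthogonal basis $\{b_1, b_2, \dots, b_k\}$ using these.

We can write,

$$\begin{aligned} b_1 &= a_1 \\ b_2 &= a_2 - \frac{\langle a_2, b_1 \rangle}{\langle b_1, b_1 \rangle} b_1 \\ &\;\;\vdots \\ b_k &= a_k - \sum_{i=1}^{k-1} \frac{\langle a_k, b_i \rangle}{\langle b_i, b_i \rangle} b_i \end{aligned}$$

From this $a_j$ can be written as -

$$a_j = b_j + \sum_{i=1}^{j-1} r_{ij} b_i, \qquad r_{ij} = \frac{\langle a_j, b_i \rangle}{\langle b_i, b_i \rangle}$$

In matrix form this could be written as -

$$\begin{bmatrix} a_1 & a_2 & \cdots & a_k \end{bmatrix} = \begin{bmatrix} b_1 & b_2 & \cdots & b_k \end{bmatrix} \begin{bmatrix} 1 & r_{12} & \cdots & r_{1k} \\ 0 & 1 & \cdots & r_{2k} \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & 1 \end{bmatrix}$$

If we instead wish to obtain an orthonormal basis $\{q_1, q_2, \dots, q_k\}$, we can just substitute $b_i = \|b_i\| q_i$. Thus the matrix representation becomes,

$$\begin{bmatrix} a_1 & a_2 & \cdots & a_k \end{bmatrix} = \begin{bmatrix} q_1 & q_2 & \cdots & q_k \end{bmatrix} \begin{bmatrix} \|b_1\| & \langle a_2, q_1 \rangle & \cdots & \langle a_k, q_1 \rangle \\ 0 & \|b_2\| & \cdots & \langle a_k, q_2 \rangle \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \|b_k\| \end{bmatrix}$$

To summarize, we start off with a matrix $A$ whose columns $a_1, \dots, a_k$ form a basis of $W$, so that its column space spans all of $W$. Such a matrix can be decomposed into a product of two matrices $Q$ and $R$, $A = QR$, where $Q$ is an orthogonal matrix (its columns are orthonormal) and $R$ is upper triangular.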
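The process can be sketched in a few lines of Python. This is a minimal classical Gram-Schmidt under stated assumptions: the standard dot product, linearly independent input columns, and a matrix stored as a list of column vectors. The function name `gram_schmidt_qr` and the sample columns are hypothetical. Classical Gram-Schmidt can lose accuracy on ill-conditioned input, so this is an illustration of the idea rather than a numerically robust routine.

```python
import math

def dot(u, v):
    """Standard dot product on R^n."""
    return sum(a * b for a, b in zip(u, v))

def gram_schmidt_qr(columns):
    """Classical Gram-Schmidt on a list of column vectors.

    Returns (q_cols, R): q_cols is a list of orthonormal column vectors and
    R is a k x k upper-triangular matrix (list of rows) such that
    a_j = sum_i R[i][j] * q_i.
    """
    k = len(columns)
    q_cols = []
    R = [[0.0] * k for _ in range(k)]
    for j, a in enumerate(columns):
        b = list(a)
        # Subtract the projection of a_j on every earlier q_i.
        for i, q in enumerate(q_cols):
            R[i][j] = dot(a, q)
            b = [bi - R[i][j] * qi for bi, qi in zip(b, q)]
        # What is left is b_j; its length goes on the diagonal of R.
        R[j][j] = math.sqrt(dot(b, b))
        if R[j][j] < 1e-12:
            raise ValueError("columns are linearly dependent")
        q_cols.append([bi / R[j][j] for bi in b])
    return q_cols, R

# Illustrative input: three linearly independent columns in R^3.
columns = [[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
q_cols, R = gram_schmidt_qr(columns)

for row in R:
    print(row)
```

Multiplying the columns in `q_cols` by `R` should reproduce the original columns up to rounding, which is a convenient way to test an implementation like this one.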

Orthogonal Matrix

A matrix $Q$ is an orthogonal matrix if -

$$Q^T Q = I$$

This means that -

- The column vectors of the matrix are all of unit length.

- All column vectors of the matrix are orthogonal to each other.

For square orthogonal matrices $Q^T Q = Q Q^T = I$, which means that $Q^{-1} = Q^T$.
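A 2D rotation matrix is a familiar example of an orthogonal matrix. The sketch below checks that multiplying it by its transpose, in either order, gives the identity, so the transpose acts as the inverse. The helper names `transpose` and `matmul` and the angle are illustrative assumptions.

```python
import math

def transpose(M):
    """Transpose of a matrix stored as a list of rows."""
    return [list(row) for row in zip(*M)]

def matmul(A, B):
    """Matrix-matrix product A B."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

theta = math.pi / 6  # rotation by 30 degrees, an arbitrary choice
Q = [
    [math.cos(theta), -math.sin(theta)],
    [math.sin(theta),  math.cos(theta)],
]

QtQ = matmul(transpose(Q), Q)  # should be the identity
QQt = matmul(Q, transpose(Q))  # also the identity, since Q is square

for row in QtQ:
    print(row)
```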